Hello! 👋 I’m Ziming Li, currently a Ph.D. candidate in Computing and Information Sciences at Rochester Institute of Technology (RIT), advised by Dr. Roshan Peiris.

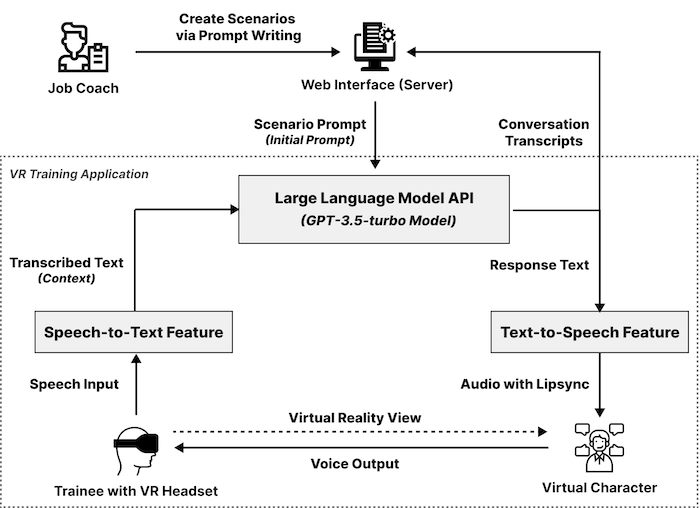

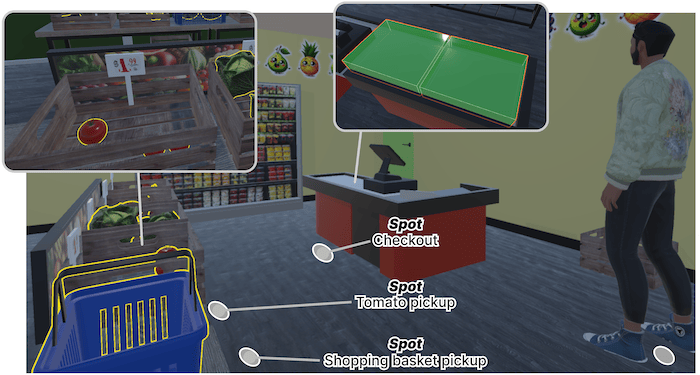

My research lies in the domains of Human-Computer Interaction (HCI), Virtual Reality (VR), and Accessibility. I focus on integrating Large Language Model (LLM)-driven agents into VR environments and exploring their potential in everyday communication scenarios and training programs, particularly for individuals with intellectual and developmental disabilities (IDDs).

I’m a member of the CAIR (Center for Accessibility and Inclusion Research) Lab and the AIR (Accessible and Immersive Realities) Lab. I am passionate about applying cutting-edge technology to develop accessible and inclusive tools, especially in VR.

Beyond academia, I’m also a web and VR/game programmer 🎮, designer 🎨, and Chinese-language writer ✍️. I enjoy crafting immersive narratives and interactive experiences.

Publications

CHI'25

Generative Role-Play Communication Training in Virtual Reality for Autistic Individuals: A Study on Job Coach Experiences in Vocational Training Programs

2025, Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems

CHI EA'25

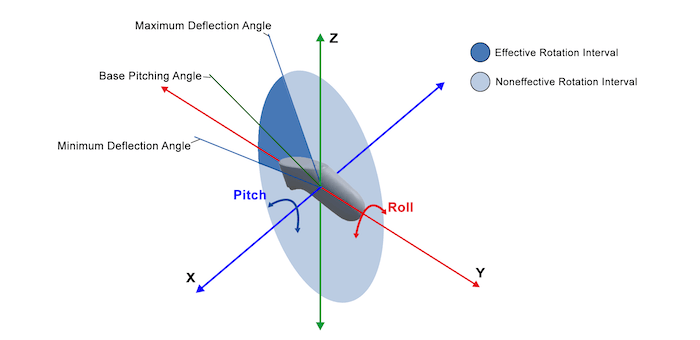

Exploring One Handed Signing During Driving for Interacting with In-vehicle Systems for Deaf and Hard of Hearing Drivers

2025, Extended Abstracts of the 2025 CHI Conference on Human Factors in Computing Systems

CHI EA'25

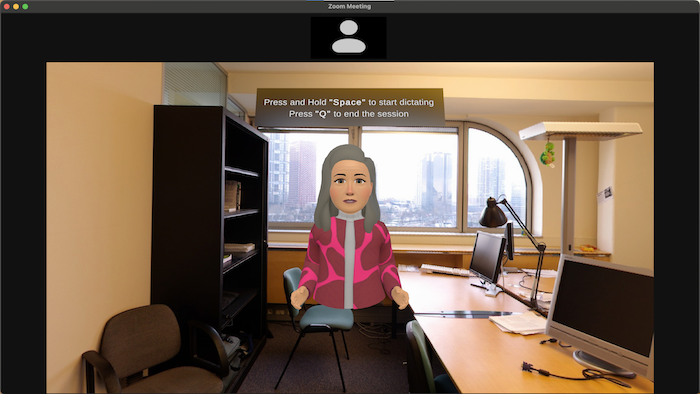

Exploring the Efficacy of a Chatbot Training Application in Alleviating Graduate Students’ Public-Speaking Anxiety During Q&A

2025, Extended Abstracts of the 2025 CHI Conference on Human Factors in Computing Systems

IEEE VR'25

Exploring Large Language Model-Driven Agents for Environment-Aware Spatial Interactions and Conversations in Virtual Reality Role-Play Scenarios

2025, IEEE Conference on Virtual Reality and 3D User Interfaces (VR)

Paper | Presentation | Video

TACCESS

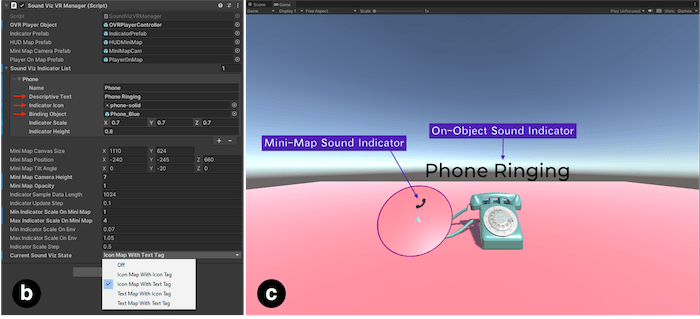

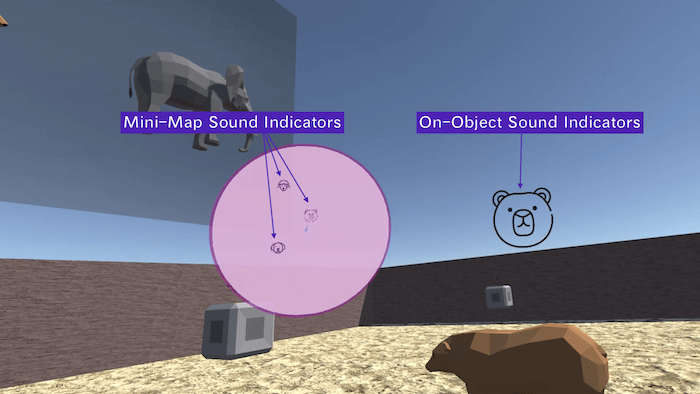

Exploring the SoundVizVR Plugin in the Development of Sound-Accessible Virtual Reality Games: Insights from Game Developers and Players

2024, ACM Transactions on Accessible Computing (TACCESS)

ASSETS'24

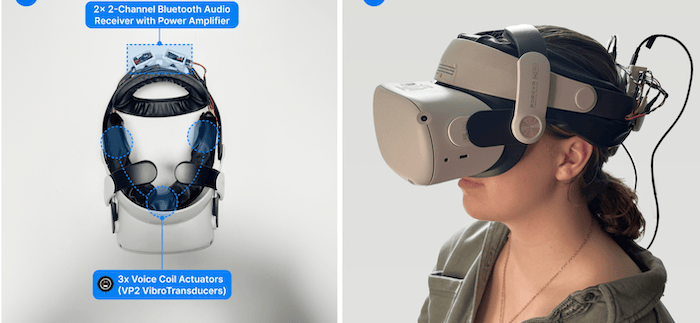

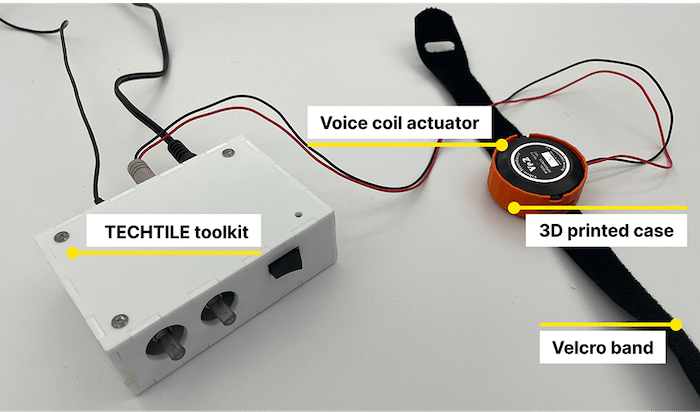

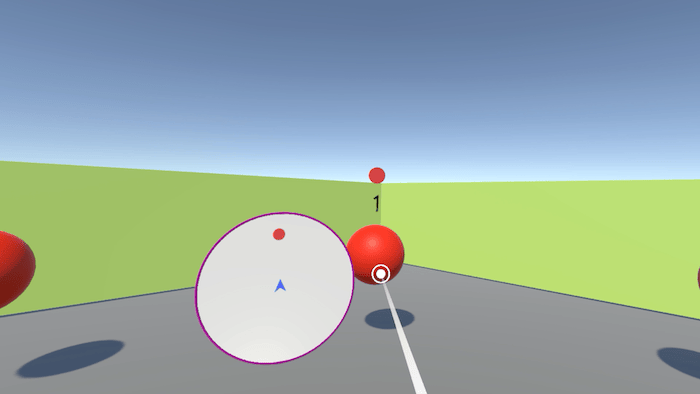

SoundHapticVR: Head-Based Spatial Haptic Feedback for Accessible Sounds in Virtual Reality for Deaf and Hard of Hearing Users

2024, 26th International ACM SIGACCESS Conference on Computers and Accessibility (ASSETS '24)

Paper | Presentation | Video

PACMHCI

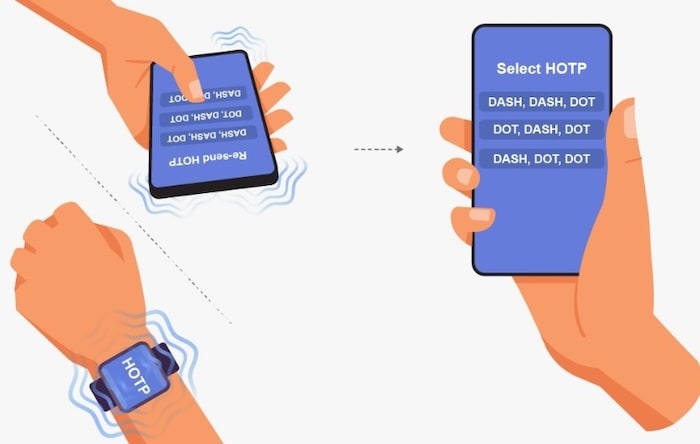

Haptic2FA: Haptics-Based Accessible Two-Factor Authentication for Blind and Low Vision People

2024, Proceedings of the ACM on Human-Computer Interaction, Volume 8, Issue MHCI

CHI EA'24

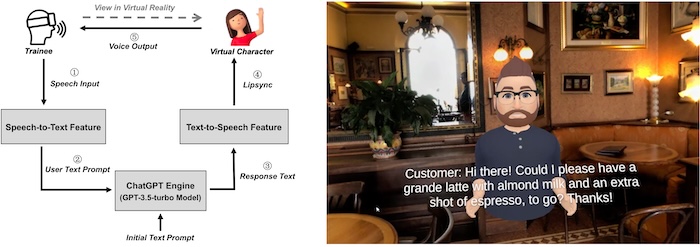

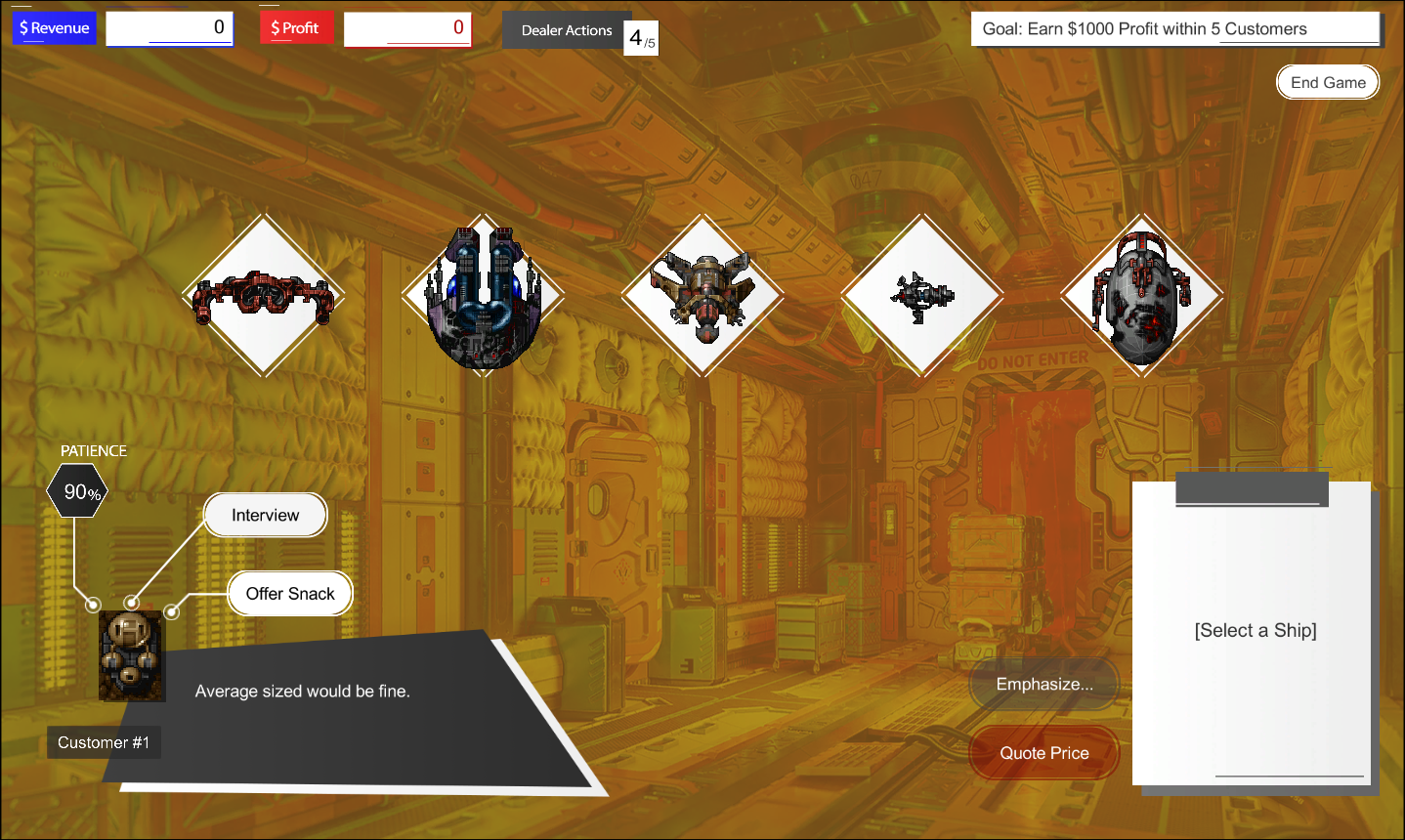

Exploring the Use of Large Language Model-Driven Chatbots in Virtual Reality to Train Autistic Individuals in Job Communication Skills

2024, Extended Abstracts of the 2024 CHI Conference on Human Factors in Computing Systems

Paper | Presentation | Video

CHI EA'23

Exploring the Use of the SoundVizVR Plugin with Game Developers in the Development of Sound-Accessible Virtual Reality Games

2023, Extended Abstracts of the 2023 CHI Conference on Human Factors in Computing Systems

Paper | Presentation | Video | Source Code

CHI'23

Haptic-Captioning: Using Audio-Haptic Interfaces to Enhance Speaker Indication in Real-Time Captions for Deaf and Hard-of-Hearing Viewers

2023, Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems

ASSETS'22

SoundVizVR: Sound Indicators for Accessible Sounds in Virtual Reality for Deaf or Hard-of-Hearing Users

2022, 24th International ACM SIGACCESS Conference on Computers and Accessibility (ASSETS '22)

Paper | Video | Source Code

Frameless Journals (Abstract)

VR Sound Mapping: Make Sound Accessible for DHH People in Virtual Reality Environments

2021, Frameless Journals Vol. 4 (Research Abstract)

Research Projects

Creative Works

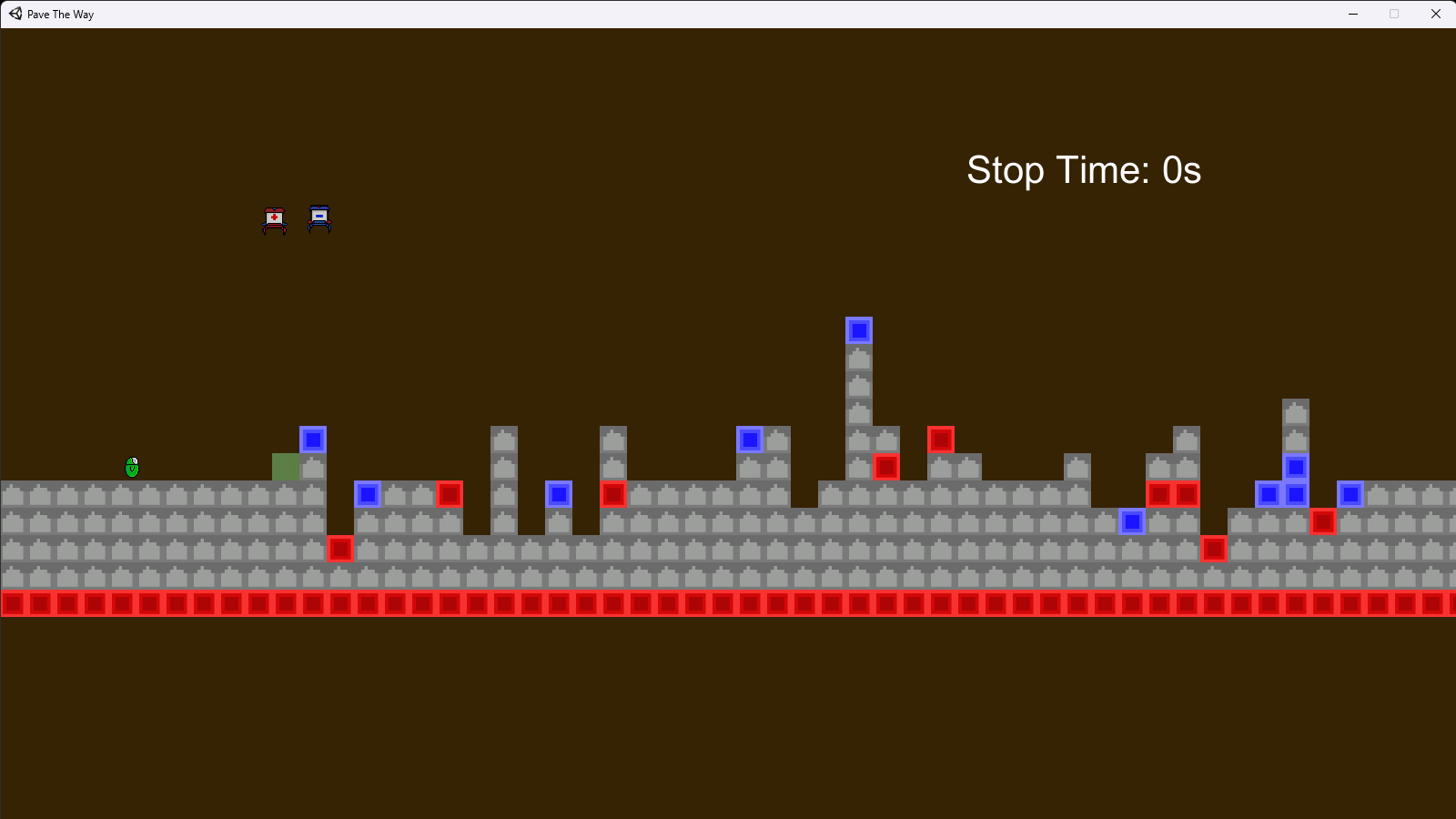

Non-academic creative projects